Authors

The list of book contributors is presented below.

Tallinn University of Technology (TalTech)

- Rahul Razdan, Ph.D

- Mohsen Malayjerdi, Ph.D

Silesian University of Technology

- Roman Czyba, Ph. D., DSc., Eng.

- Piotr Czekalski, Ph. D., Eng.

- Tomasz Grzejszczak, Ph. D., Eng.

Riga Technical University

- Agris Nikitenko, Ph. D., Eng.

- Karlis Berkolds, M. sc., Eng.

- Larisa Survilo, M. sc., Eng.

ITT Group

- Raivo Sell, Ph. D., ING-PAED IGIP

ProDron

- Tomasz Siwy, CEO

Czech Technical University

- Ing. Libor Přeučil, CSc. (Ing. = Master of Engineering, CSc. = Ph. D.)

- Ing. Karel Košnar, Ph.D. (Ing. = Master of Engineering)

Technical Editor

- Raivo Sell, Ph. D., ING-PAED IGIP

External Contributors

Reviewers

Project Information

This content was implemented under the project: SafeAV - Harmonizations of Autonomous Vehicle Safety Validation and Verification for Higher Education.

Project number: 2024-1-EE01-KA220-HED-000245441.

Consortium

- ITT Group, Tallinn, Estonia (Coordinator)

- Silesian University of Technology, Gliwice, Poland

- Riga Technical University, Riga, Latvia

- Czech Technical University in Prague, Prague, Czech Republic

- Tallinn University of Technology, Tallinn, Estonia

- ProDron, Gliwice, Poland

Erasmus+ Disclaimer

This project has been funded with support from the European Commission.

This publication reflects the views only of the author, and the Commission cannot be held responsible for any use which may be made of the information contained therein.

Copyright Notice

This content was created by the SafeAV Consortium 2024–2027.

The content is copyrighted and distributed under CC BY-NC Creative Commons Licence and is free for non-commercial use.

Introduction

Electronics design trends have revolutionized society. The first wave was centralized computing, led by firms like IBM and DEC. These technologies enhanced productivity for global business operations, significantly impacting finance, HR, and administrative functions and eliminating the need for extensive paperwork. The next wave of economy-shaping technologies consisted of edge computing devices (red in the figure below) such as personal computers, cell phones, and tablets. With this capability, companies such as Apple, Amazon, Facebook, Google, and others could add enormous productivity to the advertising and distribution functions of global business. Suddenly, one could directly reach any customer anywhere in the world. This mega-trend has fundamentally disrupted markets such as education (online), retail (e-commerce), entertainment (streaming), commercial real estate (virtualization), health (telemedicine), and more. The next wave of electronics is the dynamic integration of artificial intelligence with physical assets, and the apex of this capability is autonomy.

Autonomy research traces its lineage to mid-20th-century cybernetics and control theory, where researchers like Norbert Wiener, Ross Ashby, and early robotics pioneers explored how machines could sense, process feedback, and act purposefully. The 1960s–1980s brought key breakthroughs: Shakey the Robot at SRI demonstrated integrated perception, planning, and action; DARPA’s Autonomous Land Vehicle project pushed early computer vision and navigation; and advances in probabilistic robotics—such as Kalman filtering, Bayesian estimation, and SLAM—formalized how autonomous systems make decisions under uncertainty. During this period, autonomy was largely rule-based and dominated by deterministic control, limited sensing, and narrow computational capabilities.

Modern autonomy began accelerating in the 1990s and 2000s with increased computing power, the rise of machine learning, and large-scale government programs. The DARPA Grand Challenges (2004–2007) marked a turning point, proving that self-driving vehicles could handle complex, unstructured environments and catalyzing both academic and commercial investment. The 2010s saw deep learning revolutionize perception, enabling robust object detection, scene understanding, and end-to-end control. This expanded autonomy from traditional robotics to autonomous systems in the ground, maritime, airborne, and space contexts.

Given the massive amount of research, several books have been written on autonomy. For example, Introduction to Autonomous Robots provides a comprehensive and accessible foundation for designing autonomous systems, covering the essential building blocks such as robot mechanisms, sensing modalities, actuation, perception, localization, mapping, and planning. It is widely used in university courses because it blends theory with practical algorithms, offering clear explanations of how autonomous robots interpret their environment and make decisions. Distributed Autonomous Robotic Systems, by contrast, focuses on the challenges and architectures of multi-robot and swarm systems, exploring decentralized control, coordination, communication, and robustness in distributed environments. Together, these two books span the spectrum from single-robot autonomy to collaborative, multi-agent systems, giving readers a solid grasp of both foundational robotics and the complexities of distributed autonomy.

In contrast to existing literature, this book focuses on the innovations required for a core design to be integrated into the governing systems in society. This process is especially challenging for autonomous systems because they integrate four broad domains which have traditionally not interacted with each other:

- Legal and regulatory structures which implicitly have assumed human actors.

- Traditional mechanically focused safety protocols for cyber-physical systems.

- Traditional software product development flows.

- New artificial intelligence-based algorithms which replace the “driver” for autonomy.

The remainder of this book is organized as follows. Chapter 2 provides a high-level introduction to autonomous systems, including the underlying technologies and their interaction with regulatory, safety, and standards environments. Chapter 3 examines hardware architectures, with particular emphasis on sensors, high-performance computing platforms, and emerging challenges in hardware supply chains. Chapter 4 focuses on software architecture, including real-time execution, safety-critical software development, and the growing importance of stable and secure software supply chains. Chapter 5 explores higher-level autonomy algorithms for perception, mapping, and localization, with a focus on system safety and reliability. Chapter 6 addresses planning, control, and decision-making, examining how autonomous systems translate perception into safe and effective action. Finally, Chapter 7 examines communication between autonomous systems, humans, and infrastructure—including human–machine interfaces (HMI) and vehicle-to-everything (V2X) communication—with an emphasis on integrated system safety and operational robustness.

Autonomous Systems

Autonomous systems use sensors (e.g. cameras, radars, ultrasonic sensors) to collect information about the environment. The collected data are processed, and decisions regarding further action are made on their basis. What exactly is autonomy? The autonomy of a system can be defined as its ability to act according to its own goals, norms, internal states, and knowledge, without external human intervention. This means that autonomous systems are not limited to robots or unmanned vehicles. This definition includes any automatic functions that can reduce the level of workload or support the person driving the vehicle.

Autonomous systems use advanced technologies such as artificial intelligence, machine learning, neural networks, and the Internet of Things to perform tasks independently. They are a core element of Industry 4.0 and are used in areas ranging from robotics, transport, and logistics to medicine and education. Examples include an autonomous car that makes decisions on its own based on sensor data, or an Automated Guided Vehicle (AGV) designed to transport loads safely and efficiently in a warehouse without operator supervision. Another application is production systems that, based on data from industrial sensors, automatically control production processes and machines and optimize production. This shortens production times, reduces production costs, and increases product quality. Autonomous systems are also used in transport and logistics, where they enable faster and more efficient delivery of goods. Thanks to the Internet of Things and monitoring systems, every stage of transport can be tracked, from loading to delivery, which allows for better control of the process. Autonomous systems are becoming an increasingly important part of our lives, and their development and application will have a growing impact on the future.

Autonomous systems operate in fundamentally different physical environments across ground, marine, airborne, and space domains, and these environmental differences strongly influence system design, sensing, safety, and operational architecture. Ground systems operate in highly structured but unpredictable environments with dense obstacles, human interaction, and high-bandwidth connectivity, requiring real-time perception, fast reaction times, and robust human safety assurance. Marine systems operate in less structured but slower-moving three-dimensional environments with fewer obstacles, limited connectivity, and strong environmental disturbances such as waves, currents, and corrosion, placing greater emphasis on long-duration reliability, navigation robustness, and remote supervision. Airborne systems operate in three-dimensional, safety-critical environments governed by strict airspace control, requiring extremely high reliability, precise navigation, fault tolerance, and formal certification due to the severe consequences of failure. Space systems operate in the most extreme and isolated environment, characterized by radiation exposure, vacuum, extreme temperature variation, and long communication delays, making real-time human intervention impossible and requiring systems to be highly autonomous, fault-tolerant, and capable of operating independently for extended periods. As a result, autonomy architectures, safety requirements, sensing modalities, and verification approaches vary significantly across these domains, even though they share common underlying principles of perception, decision-making, and control.

Overall, autonomy is a transformational technology that will drive economic processes and, through them, transform society. To be effective, autonomy must integrate with the critical elements of society; the rest of this chapter discusses these elements in more detail.

Definitions, Classification, and Levels of Autonomy

Intuitively, the autonomy of unmanned systems refers to their ability to self-manage, make decisions, and complete tasks with minimal or no human intervention. To collaborate with other systems or humans, autonomy requires a clear system definition. This definition not only communicates function to partners and users, but also sets an expectation function. Expectation functions are central to many technical (validation), governance (licensing), and legal (liability) processes. Each of the physical domains has built somewhat similar “levels” of autonomy, which begin to set expectation functions.

Levels of Ground Vehicle Autonomy

For ground vehicles, the US-based Society of Automotive Engineers (SAE) International adopted a classification of six levels of autonomous driving in 2014 and subsequently modified it in 2016. Following a decision by the National Highway Traffic Safety Administration (NHTSA), this is the officially applicable standard in the United States, and it is also the classification most widely used in studies on autonomous driving technologies in Europe.

To clarify the situation, SAE International has defined six levels of automation for autonomous vehicles (Levels 0 to 5), which have been adopted as an industry standard (see Figure 2).

- Level 0: The driver has full control of the vehicle and there are no automated systems.

- Level 1: Also known as “hands-on,” the driver controls all standard driving functions, such as steering, acceleration, braking, and parking. Some automated systems, such as cruise control, parking assist, and lane-keeping assist, will be built into the car. The driver must monitor their surroundings and be able to take full control at any time.

- Level 2: “Hands-free” automation means that the automated system can take full control of the vehicle, steering, accelerating, and braking. However, the driver must be ready to take control of the vehicle if necessary. The “hands-free” principle shouldn't be taken literally, and the SAE recommends that the hands remain in contact with the steering wheel to confirm that the driver is ready to take control.

- Level 3: Level 3 is referred to as “eyes-off” automation. The driver can focus on activities other than driving, such as using a phone or watching a movie. The automated system can respond to situations requiring immediate action, such as emergency braking, but the driver must still intervene when notified by the system.

- Level 4: The next level is “mind-off” automation. It's essentially similar to Level 3 in that the driver doesn't need to monitor their surroundings. In fact, they can fall asleep, as driver intervention isn't required, even in emergency situations. However, this level of autonomy is only supported in limited areas or under specific circumstances, such as traffic jams.

- Level 5: Level 5 means “steering wheel optional.” The car is fully autonomous and requires no human intervention.

Today, these levels have become the shorthand to communicate expectations and the object of regulatory and legal battles.

Levels of Airborne Autonomy

In general, autonomy or autonomous capability is defined in the context of decision-making or self-governance within a system. According to the Aerospace Technology Institute (ATI), autonomous systems can essentially decide independently how to achieve mission objectives, without human intervention [2]. These systems are also capable of learning and adapting to changing conditions in the operating environment. However, autonomy may depend on the design, functions, and specifics of the mission or system [3]. Autonomy can be broadly viewed as a spectrum of capabilities, from zero autonomy to full autonomy. The Pilot Authorisation and Control of Tasks (PACT) model assigns authorization levels from level 0 (full pilot authority) to level 5 (full system autonomy), a scale comparable to the one used in the automotive industry for autonomous vehicles (see Figure 3).

Levels of autonomy in drone technology are typically divided into six distinct levels (Level 0 to Level 5), each representing a gradual increase in the drone's ability to operate independently.

- Level 0: The pilot has complete control over every movement. The platform is always 100% manually controlled.

- Level 1 - Remote Control: The pilot retains control of overall operations and vehicle safety. However, the drone can take over one or more essential functions for a specified period. Although the drone does not have continuous control of the vehicle and never controls speed and direction simultaneously, it can assist with navigation and/or maintain altitude and position. The drone is supported by GNSS for flight stabilization, and all inputs regarding direction, altitude, and speed are entered manually. Obstacle detection functions are available at this level, but avoidance is performed manually by the pilot.

- Level 2 - Automated Flight Control (Assisted Autonomy): A pre-programmed flight path is transmitted to the autopilot, and the drone begins its mission, flying along waypoints. The pilot is still responsible for the safe control of the vehicle and must be ready to take control of the drone if an unexpected event occurs. However, under certain conditions, the drone itself can take control of its heading, altitude, and speed. The pilot retains overall responsibility, including monitoring airspace and flight conditions and responding to emergencies. Most manufacturers currently build drones at this level, where the platform can assist with navigation functions and allow the pilot to disengage from certain tasks.

- Level 3 - Partial Autonomy (Semi-Autonomous): Similar to Level 2, the drone can fly autonomously, but the pilot must be alert and ready to take control at any time. The drone notifies the pilot of the need for intervention, acting as an emergency system. This level means that the drone can perform all functions “under certain conditions.”

- Level 4 - Cognitive Autonomy (Advanced Semi-Autonomous): The drone can be controlled by a human, but it does not always have to be. Under the right conditions, it can fly autonomously at all times. The drone is expected to have redundant systems so that if one system fails, it continues to operate. At this level of autonomy, the “sense and avoid” function becomes “sense and navigate.” This means that the drone detects obstacles along its flight path and actively avoids contact by changing its flight trajectory.

- Level 5 - Full Autonomy: The drone controls itself independently in any situation, without the need for human intervention. This includes full automation of all flight tasks in all conditions. Currently, such drones do not yet exist. However, it is expected that in the near future they will be able to use artificial intelligence tools for flight planning, that is, autonomous learning systems with the ability to modify routine behavior.

Another general but useful model describing autonomy levels in unmanned systems is the Autonomy Levels for Unmanned Systems (ALFUS) model [5]. The European Union Aviation Safety Agency (EASA), in one of its technical reports, provided some information on autonomy levels and guidelines for human-autonomy interactions. According to EASA, the concept of autonomy, its levels, and human-autonomous system interactions are not established and remain actively discussed in various areas (including aviation), as there is currently no common understanding of these terms [6]. Because these concepts are still under development, they pose a significant challenge for the regulation of unmanned aircraft.

The classification of autonomy levels in multi-drone systems is somewhat different. In multi-drone systems, several drones cooperate to perform a specific task. Designing multi-drone systems requires that individual drones have an increased level of autonomy. The classification of autonomy levels is directly related to the division into flights performed within the pilot's or observer's line of sight (VLOS) and flights performed beyond the pilot's line of sight (BVLOS), where particular attention is paid to flight safety. One way to address the autonomy issue is to classify the autonomy of drones and multi-drone systems into levels related to the hierarchy of tasks performed [7]. These levels will have standard definitions and protocols that will guide technology development and regulatory oversight. For single-drone autonomy models, two distinct levels are proposed: the vehicle control layer (Level 1) and the mission control layer (Level 2), see Figure 5. Multi-drone systems, on the other hand, have three levels: single-vehicle control (Level 1), multi-vehicle control (Level 2), and mission control (Level 3). In this hierarchical structure, Level 3 has the lowest priority and can be overridden by Levels 2 or 1.
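The priority rule in this hierarchy can be illustrated with a minimal Python sketch. It assumes that a lower level number always outranks a higher one (the text states this for Level 3, and the ordering between Levels 1 and 2 is an assumption here); the `Command` type and the `arbitrate` function are hypothetical names introduced only for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Command:
    # 1 = single-vehicle control, 2 = multi-vehicle control, 3 = mission control
    source_level: int
    action: str


def arbitrate(commands: list[Command]) -> Optional[Command]:
    """Return the command issued by the highest-priority layer.

    Assumption: a lower level number means a higher priority, so mission
    control (Level 3) is overridden whenever Level 2 or Level 1 issues a command.
    """
    if not commands:
        return None
    return min(commands, key=lambda c: c.source_level)


requests = [
    Command(3, "continue survey pattern"),
    Command(2, "hold formation spacing"),
    Command(1, "climb to avoid obstacle"),
]
chosen = arbitrate(requests)
print(chosen.action)
```

In this sketch the vehicle-level request wins because it originates from the layer closest to flight safety; a real system would also need timing, hand-back, and conflict-logging rules that are not modelled here.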

Marine autonomy (IMO MASS levels) and Space autonomy (NASA ALFUS framework)

For marine systems, the International Maritime Organization (IMO) defines autonomy through its Maritime Autonomous Surface Ship (MASS) framework, which describes four progressive levels of autonomy based on the degree of human involvement and onboard decision-making capability. At lower levels, ships use automation primarily to assist human crews with navigation, propulsion, and safety monitoring, while humans remain onboard and responsible for operational decisions. Intermediate levels allow remote operation, where ships may operate without onboard crew but are supervised and controlled from shore-based control centers. At the highest level, fully autonomous vessels can perceive their environment, make navigation and mission decisions independently, and execute those decisions without human intervention. This framework reflects the operational realities of maritime missions, where long durations, predictable dynamics, and remote monitoring make gradual progression toward autonomy feasible.

In space systems, autonomy is commonly described using NASA’s Autonomy Levels for Unmanned Systems (ALFUS) framework, which evaluates autonomy based on the system’s independence from human control, its ability to handle environmental complexity, and its capacity to accomplish mission objectives without intervention. At lower levels, spacecraft rely heavily on ground operators for command and control, executing predefined instructions with minimal onboard decision-making. As autonomy increases, spacecraft gain the ability to perform functions such as fault detection and recovery, autonomous navigation, and adaptive mission planning. At the highest levels, systems can independently perceive their environment, evaluate mission goals, and dynamically adjust their behavior to achieve objectives without real-time human guidance. This progression is particularly important in deep-space missions, where communication delays make continuous human control impractical.

Why marine and space autonomy frameworks differ from ground autonomy:

Marine and space autonomy frameworks differ fundamentally from ground autonomy because their operational constraints emphasize endurance, remote operation, and system resilience rather than continuous interaction with humans in dense, unpredictable environments. Ground vehicles must operate safely in close proximity to human drivers, pedestrians, and complex infrastructure, requiring highly responsive real-time perception and decision-making. In contrast, marine systems operate in relatively open environments with fewer immediate hazards, allowing autonomy to focus more on navigation efficiency and remote supervision. Space systems present even greater challenges, including extreme communication latency, harsh environmental conditions, and the impossibility of real-time human intervention, requiring spacecraft to autonomously detect faults, maintain operational health, and ensure mission survival. As a result, autonomy in marine and space systems is driven more by operational independence and mission continuity than by immediate human safety interactions. The table below provides a summary of all four domains.

| Unified Level | Ground (SAE J3016) | Airborne (NASA / UAV / DoD) | Marine (IMO MASS / DNV) | Space (NASA ALFUS) | Description |
|---|---|---|---|---|---|
| Level 0 | Level 0 – No automation | Manual flight | AL 0 – Manual ship | ALFUS 0 – Manual | Human performs all sensing, planning, and control |
| Level 1 | Level 1 – Driver assistance | Basic autopilot (e.g., altitude hold, heading hold) | MASS 1 – Decision support | ALFUS 1 – Teleoperation assist | Automation assists human but does not replace decision-making |
| Level 2 | Level 2 – Partial automation | Automated flight execution with supervision | MASS 2 – Remotely controlled with crew onboard | ALFUS 2 – Automated execution | System performs control functions but human supervises continuously |
| Level 3 | Level 3 – Conditional automation | Supervisory autonomy | MASS 3 – Remotely controlled without crew | ALFUS 3 – Supervisory autonomy | System performs mission tasks but human intervenes when needed |
| Level 4 | Level 4 – High automation | High autonomy UAV | MASS 4 – Fully autonomous ship | ALFUS 4–5 – High autonomy spacecraft | System operates independently in defined environments |
| Level 5 | Level 5 – Full automation | Fully autonomous UAV | Fully autonomous ship (advanced DNV AL 4+) | ALFUS 6 – Full autonomy | System operates independently in all environments |

The classification of autonomy into structured levels is not merely a technical taxonomy; it serves as a foundational construct for legal responsibility, regulatory approval, and ethical governance. These autonomy levels define an expectation function, which specifies who (human or machine) is responsible for sensing, decision-making, and action execution under defined operational conditions. This expectation function becomes the basis for certification, validation, liability assignment, and operational authorization, which are discussed in the next section.
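One way to make the idea of an expectation function concrete is to write it as a lookup from autonomy level to responsible party. The following Python sketch is only an illustrative reading of the "Description" column of the unified table: the `Expectation` fields, the `EXPECTATIONS` mapping, and the specific human/machine assignments (in particular for Levels 1 to 3) are assumptions made for this example, not definitions taken from any standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Expectation:
    sensing: str             # who monitors the environment
    decision: str            # who decides what to do
    action: str              # who executes the control action
    human_fallback: bool     # is a human expected to intervene when needed?
    restricted_domain: bool  # is the machine's role limited to defined conditions?


# Illustrative assignments per unified level (assumed, see lead-in).
EXPECTATIONS = {
    0: Expectation("human", "human", "human", True, False),
    1: Expectation("human", "human", "shared", True, False),
    2: Expectation("human", "human", "machine", True, False),
    3: Expectation("machine", "machine", "machine", True, True),
    4: Expectation("machine", "machine", "machine", False, True),
    5: Expectation("machine", "machine", "machine", False, False),
}


def responsible_party(level: int, task: str) -> str:
    """Return who is expected to perform `task` at the given unified level."""
    if level not in EXPECTATIONS:
        raise ValueError(f"unknown autonomy level: {level}")
    if task not in ("sensing", "decision", "action"):
        raise ValueError(f"unknown task: {task}")
    return getattr(EXPECTATIONS[level], task)


for level in sorted(EXPECTATIONS):
    print(level, EXPECTATIONS[level])
```

Writing the expectation function down in this explicit form shows why the levels matter for liability: each cell is a claim about who should have acted, which is exactly what a validation plan or a court would examine.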

Legal, Ethical, and Regulatory Frameworks

In society, products operate within the confines of a legal governance structure. The legal governance structure is one of the great inventions of civilization, and its primary role is to funnel disputes from unstructured expression, and perhaps even violence, into the domain of courts (Figure 1). To be effective, legal governance structures must be perceived as fair and predictable. Fairness is pursued through methods such as due process procedures, transparency and public proceedings, and neutral decision-makers (judges, juries, arbitrators). Predictability is achieved through the concept of precedent. Precedent is the idea that past rulings carry heavy weight in current decisions, and diverging from precedent is an extraordinary event. Precedent gives the legal system stability. The combination of fairness and predictability shifts the resolution of disputes to a more orderly process, which promotes societal stability.

How does this work mechanically, and how does it connect to product development?

As shown in Figure 2, there are three major stages. First, legal frameworks are established by law-making bodies (legislators). In practice, legislators cannot specify all aspects, so they empower administrative entities (regulators) to codify the details of the law. Regulators, in turn, often do not have the technical knowledge to codify all aspects of the law and rely on independent industry groups such as the Society of Automotive Engineers (SAE) or the Institute of Electrical and Electronics Engineers (IEEE) for technical knowledge. Second, disputes arise in the field and must be adjudicated by the legal system. The typical process is a trial, conducted under the strict procedures established for fairness. The trial applies the facts to the legal framework and produces a judgement. The facts of the case can lead to one of three outcomes. In the first situation, the facts are covered by the legal framework, so there is no further action relative to the governance structure. In the second case, the facts expose an “edge” condition in the governance structure. In this situation, the court looks for previous cases that might fit (the concept of precedent) and uses them to make its judgement. If no such case exists, the court can establish precedent with its judgement in this case, which then carries weight in future decisions as well. Finally, in rare situations, the facts of the case lie in a field so new that there is little existing body of law. In these situations, the courts may make a judgement, but there is often a call for law-making bodies to establish deeper legal frameworks.

In fact, autonomous vehicles (AVs) are considered to be one of these situations. Why? In traditional automobiles, product liability law attaches to the car, and liability for actions taken while using the car attaches to the driver. Further, product liability is often managed at the federal level and driver licensing more locally. However, surprisingly, as the figure below shows, there is a body of law dealing with autonomous vehicles from the distant past. In the days of horses, there were accidents, and a sophisticated liability structure emerged. In this structure, if a person directed his horse into an accident, then the driver was at fault. However, if a bystander did something to “spook” the horse, it was the bystander's fault. Finally, there was also the concept of “no-fault” when a horse unexpectedly went rogue. A discerning reader may well recognize that this body of law emerges from a deep understanding of the characteristics of a horse. In legal terms, it creates an “expectation.” What are the “expectations” for a modern autonomous vehicle? This is currently a highly debated point in the industry.

Overall, whatever value products provide to their consumers is weighed against the potential harm caused by the product, which leads to the concept of legal product liability. While laws diverge across geographies, the fundamental tenets share the key elements of expectation and harm. Expectation, as judged by “reasonable behavior given a totality of the facts,” attaches liability. As an example, the clear expectation is that a train cannot stop instantly if you stand in front of it, whereas this is not the expectation for most autonomous driving situations. Harm is another key concept: AI recommendation systems for movies are not held to the same standards as autonomous vehicles. The governance framework for liability is mechanically developed through legislative actions and associated regulations. The framework is tested in the court system under the particular circumstances or facts of the case. To provide stability to the system, the database of cases and decisions is viewed as a whole under the concept of precedent. Legal points are clarified by the appellate system, where arguments on the application of the law are decided, and these decisions set precedent.

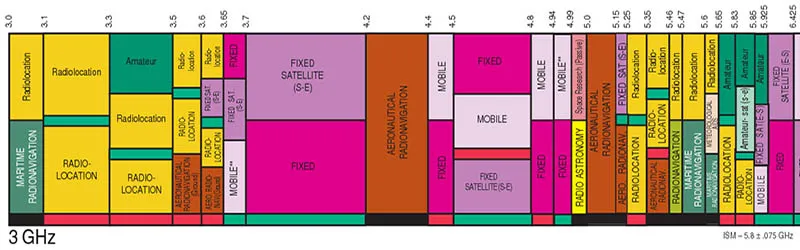

What is an example of this whole situation? Consider the airborne domain in the figure above, where the governance framework consists of enacted law (in this case US law) with associated cases providing legal precedent, regulations, and industry standards. Any product in the airborne sector must comply with this framework before it can be released to the marketplace.

Ref:

- Razdan, R., (2019) “Unsettled Technology Areas in Autonomous Vehicle Test and Validation,” Jun. 12, 2019, EPR2019001.

- Razdan, R., (2019) “Unsettled Topics Concerning Automated Driving Systems and the Transportation Ecosystem,” Nov 5, 2019, EPR2019005.

- Ross, K. Product Liability Law and its effect on product safety. In Compliance Magazine 2023, [Online]. Available: https://incompliancemag.com/product-liability-law-and-its-effect-on-product-safety/

Introduction to Validation and Verification in Autonomy

As discussed in the governance section, whatever value products provide to their consumers is weighed against the potential harm caused by the product, which leads to the concept of legal product liability. From a product development perspective, the combination of laws, regulations, and legal precedent forms the overriding governance framework around which the system specification must be constructed [3]. The process of validation ensures that a product design meets the user's needs and requirements, and verification ensures that the product is built correctly according to design specifications.

Fig. 1. V&V and Governance Framework.

The Master V&V (MaVV) process needs to demonstrate that the product has been reasonably tested given the reasonable expectation of causing harm. It does so using three important concepts [4]:

- Operational Design Domain (ODD): This defines the environmental conditions and operational model under which the product is designed to work.

- Coverage: This defines the completeness over the ODD to which the product has been validated.

- Field Response: When failures do occur, the procedures used to correct product design shortcomings to prevent future harm.

As Figure 1 shows, the Verification & Validation (V&V) process is the key input into the governance structure which attaches liability, and per the governance structure, each of the elements must show “reasonable due diligence.” An example of an unreasonable ODD would be one in which an autonomous vehicle gives up control a millisecond before an accident.

Mechanically, MaVV is implemented with a Minor V&V (MiVV) process consisting of:

- Test Generation: From the allowed ODD, test scenarios are generated.

- Execution: The test is “executed” on the product under development. Mathematically, execution is a functional transformation which maps the test stimulus to results.

- Criteria for Correctness: The results of the execution are evaluated for success or failure against a crisp criterion for correctness.

In practice, each of these steps can have quite a bit of complexity and associated cost. Since the ODD can be a very wide state space, intelligently and efficiently generating the stimulus is critical. Typically, in the beginning, stimulus generation is done manually, but this quickly fails the efficiency test in terms of scaling. In virtual execution environments, pseudo-random directed methods are used to accelerate this process. In limited situations, symbolic or formal methods can be used to mathematically carry large state spaces through the whole design execution phase. Symbolic methods have the advantage of completeness but face computational explosion, as many of the underlying problems are NP-complete.
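The three MiVV steps can be sketched as a small loop. This is a minimal illustration only: the ODD bounds, the `Scenario` fields, and the toy braking model in `execute` are hypothetical stand-ins for a real product and simulator, and uniform sampling is just the simplest form of pseudo-random generation.

```python
import random
from dataclasses import dataclass

@dataclass(frozen=True)
class Scenario:
    speed_mps: float    # initial vehicle speed
    obstacle_m: float   # distance to a stationary obstacle
    friction: float     # tyre-road friction coefficient

# Hypothetical ODD bounds: (min, max) for each scenario parameter.
ODD = {"speed_mps": (0.0, 30.0), "obstacle_m": (5.0, 150.0), "friction": (0.3, 0.9)}

def generate(rng: random.Random) -> Scenario:
    """Test generation: draw one scenario uniformly from the ODD."""
    return Scenario(**{name: rng.uniform(lo, hi) for name, (lo, hi) in ODD.items()})

def execute(s: Scenario) -> float:
    """Execution: toy model returning the gap left after a full stop (metres)."""
    g, reaction_s = 9.81, 0.5
    stop_m = s.speed_mps * reaction_s + s.speed_mps ** 2 / (2 * s.friction * g)
    return s.obstacle_m - stop_m

def is_correct(gap_m: float) -> bool:
    """Criterion for correctness: the vehicle must stop before the obstacle."""
    return gap_m > 0.0

def run_campaign(n: int, seed: int = 0) -> list:
    """Run n generate/execute/check cycles and return the failing scenarios."""
    rng = random.Random(seed)  # seeded so that any failure can be replayed
    failures = []
    for _ in range(n):
        scenario = generate(rng)
        if not is_correct(execute(scenario)):
            failures.append(scenario)
    return failures

failures = run_campaign(1000)
print(f"{len(failures)} of 1000 scenarios failed")
```

Seeding the generator is a deliberate choice: a failing scenario found in a pseudo-random campaign is only useful if it can be reproduced for diagnosis.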

The execution stage can be done physically (for example, on a test track), but this process is expensive, slow, has limited controllability and observability, and in safety-critical situations is potentially dangerous. In contrast, virtual methods have the advantages of cost, speed, full controllability and observability, and no safety risk. Virtual methods also have the great advantage of allowing the V&V task to be performed well before the physical product is constructed. This leads to the classic V chart shown in Figure 1. However, since virtual methods are a model of reality, they introduce inaccuracy into the testing domain, while physical methods are accurate by definition. Finally, one can intermix virtual and physical methods with concepts such as Software-in-the-Loop (SIL) or Hardware-in-the-Loop (HIL).

The observable results of applying the stimulus are captured to determine correctness. Correctness is typically defined by either a golden model or an anti-model. The golden model, typically virtual, offers an independently verified model whose results can be compared to the product under test. Even in this situation, there is typically a divergence between the abstraction level of the golden model and that of the product, which must be managed. Golden model methods are often used in computer architectures (e.g., ARM, RISC-V). The anti-model consists of error states which the product must not enter, and thus the correct behavior is the state space outside of the error states. An example in the autonomous vehicle space would be an error state such as an accident or the violation of any number of other constraints.

The MaVV consists of building a database of the various explorations of the ODD state space, and from that building an argument for completeness. The argument typically takes the form of a probabilistic analysis. After the product is in the field, field returns are diagnosed, and one must always ask the question: why did my original process not catch this issue? Once the gap is found, the test methodology is updated to prevent similar escapes going forward. The V&V process is critical in building a product which meets customer expectations and in documenting the “reasonable” due diligence required for the purposes of product liability in the governance framework.
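The two styles of correctness check can be contrasted in a few lines. In this sketch, which is an assumption-laden illustration, the golden model is a closed-form braking formula, the "product" is a hypothetical step-by-step integrator standing in for a real implementation, and the anti-model is a table of named error-state predicates; the tolerance and all names are illustrative.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2

def golden_stopping_distance(speed_mps: float, friction: float) -> float:
    """Golden model: closed-form braking distance v^2 / (2 * mu * g)."""
    return speed_mps ** 2 / (2 * friction * G)

def product_stopping_distance(speed_mps: float, friction: float,
                              dt: float = 0.001) -> float:
    """Stand-in for the product under test: explicit time-stepped integration."""
    v, x = speed_mps, 0.0
    decel = friction * G
    while v > 0.0:
        x += v * dt
        v -= decel * dt
    return x

def matches_golden(speed_mps: float, friction: float, rel_tol: float = 0.01) -> bool:
    """Golden-model check: product result must agree within a tolerance.

    The tolerance absorbs the abstraction gap between the two models.
    """
    return math.isclose(product_stopping_distance(speed_mps, friction),
                        golden_stopping_distance(speed_mps, friction),
                        rel_tol=rel_tol)

# Anti-model: named error states the product must never enter.
ERROR_STATES = {
    "collision": lambda st: st["gap_m"] <= 0.0,
    "speeding": lambda st: st["speed_mps"] > st["speed_limit_mps"],
}

def violated(state: dict) -> list:
    """Anti-model check: return the names of all error states that hold."""
    return [name for name, is_error in ERROR_STATES.items() if is_error(state)]

print(matches_golden(20.0, 0.7))
print(violated({"gap_m": 12.0, "speed_mps": 10.0, "speed_limit_mps": 13.9}))
```

The golden-model check needs a trusted reference but gives a precise expected value; the anti-model check needs no reference and only states what must never happen, which is why it scales more easily to complex behavior.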

In most cases, the generic V&V process must grapple with massive ODD spaces, limited execution capacity, and high cost of evaluation. Further, all of this must be done in a timely manner to make the product available to the marketplace. Traditionally, V&V regimes have been bifurcated into two broad categories: Physics-Based and Decision-Based. We now discuss the key characteristics of each.
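To make the tension between ODD size and execution capacity concrete, the probabilistic completeness argument can be sketched with a standard zero-failure bound. This is an illustration under simplifying assumptions that are not part of the framework above: the $n$ test scenarios are drawn independently from the distribution the product will actually meet in the field, each has a crisp pass/fail outcome, and $p$ denotes the unknown per-scenario failure probability.

$$
\Pr(\text{zero failures in } n \text{ tests}) = (1-p)^n
$$

If all $n$ tests pass, then with confidence $1-\alpha$ the failure probability is bounded by the value of $p$ at which such a clean run would itself have probability $\alpha$:

$$
p \;\le\; 1 - \alpha^{1/n} \;\approx\; \frac{-\ln \alpha}{n}, \qquad \alpha = 0.05 \;\Rightarrow\; p \lesssim \frac{3}{n}
$$

Under these assumptions, supporting a claim of $p \le 10^{-6}$ per scenario at 95% confidence takes roughly $3 \times 10^{6}$ failure-free scenarios, which shows why efficient test generation and any structure that shrinks the state space matter so much.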

Physics-Based Operating Domains

For MaVV, the critical factors are the efficiency of the MiVV “engine” and the argument for the completeness of the validation. Historically, mechanical/non-digital products (such as cars or airplanes) required sophisticated V&V. These systems were examples of a broader class of products which had a Physics-Based Execution (PBE) paradigm. In this paradigm, the underlying model execution (including real life) has the characteristics of continuity and monotonicity because the model operates in the world of physics. This key insight has enormous implications for V&V because it greatly constrains the potential state-space to be explored. Examples of this reduction of state-space include:

- Scenario Generation: One needs to consider only the state space constrained by the laws of physics. Objects which do not obey physics cannot exist, so every actor is explicitly constrained by the laws of physics.

- Monotonicity: In many interesting dimensions, there are strong properties of monotonicity. As an example, if one is considering stopping distance for braking, there is a critical speed above which there will be an accident. Critically, all the speed bins below this critical speed are safe and do not have to be explored.

Mechanically, in traditional PBE fields, the philosophy of safety regulation (ISO 26262 [5], AS9100 [6], etc.) builds the safety framework as a process, where

- failure mechanisms are identified;

- a test and safety argument is built to address the failure mechanism;

- there is a safety process by a regulator (or documentation for self-regulation) which evaluates these two and acts as a judge to approve/decline.
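The monotonicity property described earlier for braking is what makes this kind of structured safety argument tractable. A minimal sketch, assuming a toy stopping model with hypothetical parameter values, shows how a bisection search locates the critical speed with a handful of executions instead of sweeping every speed bin. The search is only valid when the pass/fail outcome is monotone in speed.

```python
def stops_in_time(speed_mps: float, obstacle_m: float = 50.0,
                  friction: float = 0.7, reaction_s: float = 0.5) -> bool:
    """Toy execution model: does the vehicle stop before a fixed obstacle?"""
    stop_m = speed_mps * reaction_s + speed_mps ** 2 / (2 * friction * 9.81)
    return stop_m < obstacle_m

def critical_speed(is_safe, lo: float = 0.0, hi: float = 60.0,
                   tol: float = 0.01) -> tuple:
    """Bisection for the speed at which is_safe flips from True to False.

    Assumes monotonicity: every speed below the threshold is safe and
    every speed above it is unsafe. Returns (safe_speed, evaluations).
    """
    if not is_safe(lo) or is_safe(hi):
        raise ValueError("bracket must start safe and end unsafe")
    evaluations = 2
    while hi - lo > tol:
        mid = (lo + hi) / 2
        evaluations += 1
        if is_safe(mid):
            lo = mid   # threshold lies above mid
        else:
            hi = mid   # threshold lies at or below mid
    return lo, evaluations

speed, runs = critical_speed(stops_in_time)
print(f"critical speed is about {speed:.2f} m/s, found with {runs} executions")
```

A uniform sweep at the same 0.01 m/s resolution would need 6000 executions over the same range. In a decision-based system with no monotonicity guarantee, this shortcut is not available.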

Traditionally, the faults considered are primarily mechanical failures. As an example, the flow for validating the braking system in an automobile through ISO 26262 would have the following steps:

- Define Safety Goals and Requirements (Concept Phase): Hazard Analysis and Risk Assessment (HARA): Identify potential hazards related to the braking system (e.g., failure to stop the vehicle, unintended braking). Assess risk levels using parameters like severity, exposure, and controllability. Define Automotive Safety Integrity Levels (ASIL) for each hazard (ranging from ASIL A to ASIL D, where D is the most stringent). Define safety goals to mitigate hazards (e.g., ensure sufficient braking under all conditions).

- Develop Functional Safety Concept: Translate safety goals into high-level safety requirements for the braking system. Ensure redundancy, diagnostics, and fail-safe mechanisms are incorporated (e.g., dual-circuit braking or electronic monitoring).

- System Design and Technical Safety Concept: Break down functional safety requirements into technical requirements, design the braking system with safety mechanisms like hardware (e.g., sensors, actuators) and software (e.g., anti-lock braking algorithms). Implement failure detection and mitigation strategies (e.g., failover to mechanical or electronic control paths).

- Hardware and Software Development: Hardware Safety Analysis (HSA): Validate that components meet safety standards (e.g., reliable braking sensors). Software Development and Verification: Use ISO 26262-compliant processes for coding, verification, and validation. Test braking algorithms under various conditions.

- Integration and Testing: Perform verification of individual components and subsystems to ensure they meet technical requirements. Conduct integration testing of the complete braking system, focusing on functional tests (e.g., stopping distance), safety tests (e.g., behavior under fault conditions), and stress/environmental tests (e.g., heat, vibration).

- Validation (Vehicle Level): Validate the braking system against safety goals defined in the concept phase. Perform real-world driving scenarios, edge cases, and fault injection tests to confirm safe operation. Verify compliance with ASIL-specific requirements.

- Production, Operation, and Maintenance: Ensure production aligns with validated designs. Implement operational safety measures (e.g., periodic diagnostics, maintenance), monitor and address safety issues during the product lifecycle (e.g., software updates).

- Confirmation and Audit: Use independent confirmation measures (e.g., safety audits, assessment reviews) to ensure the braking system complies with ISO 26262.
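The ASIL assignment in the HARA step can be illustrated with a short sketch. It relies on a widely cited shortcut: with severity (S1 to S3), exposure (E1 to E4), and controllability (C1 to C3) written as integers, the sum of the three class numbers maps onto the level. This is an illustrative aid, not a substitute for the determination table in ISO 26262-3, which remains the normative source; the S0, E0, and C0 classes (which carry no ASIL) are not modelled, and the hazard classifications in the example are hypothetical.

```python
def asil(severity: int, exposure: int, controllability: int) -> str:
    """Map S (1-3), E (1-4), C (1-3) class numbers to an ASIL or QM.

    Shortcut: S + E + C of 10 gives ASIL D, 9 gives C, 8 gives B,
    7 gives A, and anything lower is QM (quality management only).
    """
    if not (1 <= severity <= 3 and 1 <= exposure <= 4 and 1 <= controllability <= 3):
        raise ValueError("expected S in 1..3, E in 1..4, C in 1..3")
    total = severity + exposure + controllability
    return {7: "ASIL A", 8: "ASIL B", 9: "ASIL C", 10: "ASIL D"}.get(total, "QM")

# Hypothetical braking hazards with illustrative (S, E, C) classifications.
hazards = {
    "total loss of braking at highway speed": (3, 4, 3),
    "unintended light braking in city traffic": (2, 3, 2),
    "brake pedal feel slightly degraded": (1, 2, 1),
}

for name, sec in hazards.items():
    print(f"{name}: {asil(*sec)}")
```

The assigned level then drives the rigor required in every later step of the flow, from the technical safety concept through confirmation measures.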

Finally, the regulations have a strong notion of safety levels in the form of Automotive Safety Integrity Levels (ASILs). Airborne systems follow a similar trajectory (pun intended) with the concept of Design Assurance Levels (DALs). A key part of the V&V task is to meet the standards required at each ASIL. Historically, a sophisticated set of V&V techniques has been developed to verify traditional automotive systems. These techniques include well-structured physical tests, often validated by regulators or by sanctioned independent companies (e.g., TÜV SÜD [7]). Over the years, the use of virtual physics-based models has increased for design tasks such as body design [8] or tire performance [9]. The general structure of these models is to build a simulation which is predictive of the underlying physics to enable broader ODD exploration. This creates a very important flow of characterization, model generation, predictive execution, and correction. Finally, because the execution must model the underlying physics in detail, virtual simulators can have limited performance and often require extensive hardware support for simulation acceleration. In summary, the key underpinnings of the PBE paradigm from a V&V point of view are:

- Constrained and well-behaved space for scenario test generation.

- Expensive physics-based simulations.

- Regulations focused on mechanical failure.

- In safety situations, regulations focused on a process to demonstrate safety with a key idea of design assurance levels.

Traditional Decision-based Execution

As cyber-physical systems evolved, information technology (IT) rapidly transformed the world.

Fig. 4. Progression of System Specification (HW, SW, AI).

As shown in Figure 4, within electronics, there has been a progression of system function construction where the first stage was hardware or pseudo-hardware (FPGA, microcode). The next stage involved the invention of a processor architecture upon which software could imprint system function. Software was a design artifact written by humans in standard languages (C, Python, etc.). The revolutionary aspect of the processor abstraction allowed a shift in function without the need to shift physical assets. However, one needed legions of programmers to build the software. Today, the big breakthrough with Artificial Intelligence (AI) is the ability to build software with the combination of underlying models, data, and metrics.

In their basic form, IT systems were not safety critical, and similar levels of legal liability have not attached to IT products. However, the size and growth of IT is such that problems in large-volume consumer products can have catastrophic economic consequences [10]. Thus, the V&V function was very important. IT systems follow the same generic processes for V&V as outlined above, but with two significant differences around the execution paradigm and the source of errors. First, unlike the PBE paradigm, the execution paradigm of IT follows a Decision-Based Execution (DBE) mode. That is, there are no natural constraints on the functional behavior of the underlying model, and no inherent properties of monotonicity. Thus, the whole massive ODD space must be explored, which makes the job of generating tests and demonstrating coverage extremely difficult. To counter this difficulty, a series of processes have been developed to build a more robust V&V structure. These include:

- Code Coverage: The structural specification of the virtual model is used as a constraint to help drive the test generation process. This is done for both software and hardware (RTL code).

- Structured Testing: A process of component, subsection, and integration testing has been developed to minimize propagation of errors.

- Design Reviews: Structured design reviews of specifications and code are considered best practice.

A good example of this process flow is the CMU Capability Maturity Model Integration (CMMI) [11], which defines a set of processes to deliver quality software. Large parts of the CMMI architecture can be used for AI when AI is replacing existing SW components. Finally, testing in the DBE domain decomposes into the following philosophical categories:

- Known knowns: Bugs or issues that are identified and understood.

- Known unknowns: Potential risks or issues that are anticipated but whose exact nature or cause is unclear.

- Unknown unknowns: Completely unanticipated issues that emerge without warning, often highlighting gaps in design, understanding, or testing.

The last category is the most problematic and the most significant for DBE V&V. Pseudo-random test generation has been a key technique for exposing this category [12]. In summary, the key underpinnings of the DBE paradigm from a V&V point of view are:

- Unconstrained and not well-behaved execution space for scenario test generation.

- Generally, less expensive simulation execution (no physical laws to simulate).

- V&V focused on logical errors, not mechanical failure.

- Generally, no defined regulatory process for safety-critical applications. Most software is “best efforts.”

- “Unknown unknowns” as a key focus of validation.
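A small sketch can show why structural coverage alone does not address unknown unknowns and how pseudo-random generation helps. Everything here is hypothetical: `limit_speed_command` is an invented unit under test with a deliberately planted defect, and `reference` is an independent statement of the intended behaviour used as the correctness check.

```python
import random

def limit_speed_command(requested_kph: float, limit_kph: float) -> float:
    """Unit under test. Intended behaviour: clamp the request to [0, limit]."""
    if requested_kph > limit_kph:
        return limit_kph
    return requested_kph  # latent defect: negative requests pass through

def reference(requested_kph: float, limit_kph: float) -> float:
    """Independent statement of the intended behaviour."""
    return max(0.0, min(requested_kph, limit_kph))

# Directed tests derived from the code structure: both branches are exercised,
# so branch coverage is complete, yet no test uses a negative request.
directed = [(50.0, 30.0), (20.0, 30.0)]
directed_failures = [case for case in directed
                     if limit_speed_command(*case) != reference(*case)]

def random_failures(n: int, seed: int = 0) -> list:
    """Pseudo-random campaign over a deliberately wide input range."""
    rng = random.Random(seed)
    failures = []
    for _ in range(n):
        case = (rng.uniform(-100.0, 100.0), rng.uniform(0.0, 130.0))
        if limit_speed_command(*case) != reference(*case):
            failures.append(case)
    return failures

print("directed failures:", len(directed_failures))
print("random failures:  ", len(random_failures(1000)))
```

The directed tests reach full branch coverage and all pass, because the missing behaviour is not in the code to be covered. The random campaign finds the defect only because its input range is wider than what the test author had in mind, which is the practical meaning of probing for unknown unknowns.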

A key implication of the DBE space is that the idea from the PBE world of building a list of faults and building a safety argument for them is antithetical to the focus of DBE validation.

Finally, the product development process is typically focused on defining an ODD and validating against it. However, in modern times, an additional concern is that of adversarial attacks (cybersecurity), where an adversary wants to hijack the system for nefarious purposes. The product owner must then not only validate against the ODD, but also detect when the system is operating outside the ODD. After detection, the best-case scenario is to safely redirect the system back into the ODD space. The risks associated with cybersecurity typically split into three levels for cyber-physical systems:

- OTA Security: If an adversary can manipulate the Over-the-Air (OTA) software updates, they can take over a large number of devices quickly. A worst-case example would be a malicious OTA update which turns a fleet of Teslas into collision engines.

- Remote Control Security: If the adversary can take over a car remotely, they can cause harm to the occupants as well as third-parties.

- Sensor Spoofing: In this situation, the adversary uses local physical assets to fool the sensors of the target. GPS jamming or spoofing are active examples.

In terms of governance, some reasonable due-diligence is expected to be provided by the product developer in order to minimize these issues. The level of validation required is dynamic in nature and connected to the norm in the industry.
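As one illustration of detecting operation outside the ODD under sensor spoofing, a position fix can be checked against what the vehicle could physically have done since the previous fix. This is a minimal sketch, not a complete defence: the function names and the 70 m/s speed ceiling are assumptions, and a real system would cross-check against inertial and odometry data as well.

```python
import math

EARTH_RADIUS_M = 6_371_000.0

def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

def gps_fix_plausible(prev: tuple, curr: tuple, max_speed_mps: float = 70.0) -> bool:
    """Reject a fix that implies an impossible speed since the previous fix.

    prev and curr are (time_s, lat_deg, lon_deg). The speed ceiling is a
    hypothetical bound for a road vehicle.
    """
    dt = curr[0] - prev[0]
    if dt <= 0:
        return False  # non-increasing timestamps are themselves suspicious
    implied_speed = haversine_m(prev[1], prev[2], curr[1], curr[2]) / dt
    return implied_speed <= max_speed_mps

previous = (0.0, 59.4370, 24.7536)
print(gps_fix_plausible(previous, (1.0, 59.4371, 24.7536)))  # small step
print(gps_fix_plausible(previous, (1.0, 59.5370, 24.7536)))  # kilometres in one second
```

The check borrows a physics-based constraint to police a decision-based threat: whatever the adversary injects, the reported trajectory still has to be one a real vehicle could follow.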

Validation Requirements across Domains

In terms of domains, the Operational Design Domain (ODD) is the driving factor, and it typically has two dimensions. The first is the operational model and the second is the physical domain (ground, airborne, marine, space). On the ground, passenger AVs are perhaps the most well-known face of autonomy, with robo-taxi services and self-driving consumer vehicles gradually entering urban environments. Companies like Waymo, Cruise, and Tesla have taken different approaches to ODDs. Waymo’s fully driverless cars operate in sunny, geo-fenced suburbs of Phoenix with detailed mapping and remote supervision. Cruise began service in San Francisco, originally operating only at night to reduce complexity. Tesla’s Full Self Driving (FSD) Beta aims for broader generalization, but it still relies heavily on driver supervision and is limited by weather and visibility challenges.

Transit shuttles, though less publicized, have quietly become a practical application of AVs in controlled environments. These low-speed vehicles typically operate in geo-fenced areas such as university campuses, airports, or business parks. Companies like Navya, Beep, and EasyMile deploy shuttles that follow fixed routes and schedules, interacting minimally with complex traffic scenarios. Their ODDs are tightly defined: they may not operate in rain or snow, often run only during daylight, and avoid high-speed or mixed-traffic conditions. In many cases, a remote operator monitors operations or is available to intervene if needed.

Delivery robots represent a third class of autonomous mobility: compact, lightweight vehicles designed for last-mile delivery. Their ODDs are perhaps the narrowest, but that is by design. These robots, from companies like Starship, Kiwibot, and Nuro, navigate sidewalks, crosswalks, and short street segments in suburban or campus environments. They operate at pedestrian speeds (typically under 10 mph), carry small payloads, and avoid extreme weather, high traffic, or unstructured terrain. Because they don’t carry passengers, safety thresholds and regulatory oversight can differ significantly.

Weather is a particularly limiting factor across all autonomous systems. Rain, snow, fog, and glare interfere with LIDAR, radar, and camera performance, especially for smaller robots that operate close to the ground. Most AV deployments today restrict operations to fair-weather conditions. This is especially true for delivery robots and transit shuttles, which often halt operations during storms. While advanced sensor fusion and predictive modeling promise improvements, true all-weather autonomy remains a significant technical challenge. The intersection of weather and autonomy is an active research area [1].

Another ODD dimension is time of day. Nighttime operation brings unique difficulties for AVs: reduced visibility, increased pedestrian unpredictability, and in urban areas, more erratic driver behavior. Some systems (like Waymo in Chandler, AZ) now operate 24/7, but most deployments—particularly delivery robots and shuttles—remain restricted to daylight hours. Tesla's FSD does operate at night, but it still requires human oversight. Infrastructure also shapes ODDs in crucial ways. Many AV systems depend on high-definition maps, lane-level GPS, and even smart traffic signals to guide their decisions. In geo-fenced environments—where the route and surroundings are highly predictable—this infrastructure dependency is manageable. But for broader ODDs, where environments may change frequently or lack digital maps, achieving safe autonomy becomes much harder. That’s why passenger AVs today generally avoid rural areas, unpaved roads, or newly constructed zones.

Regulatory environments further shape ODDs. In the U.S., states like California, Arizona, and Florida have developed AV testing frameworks, but each differs in what it permits. For instance, California limits fully driverless vehicles to certain urban zones with strict reporting requirements. Delivery robots are often regulated at the city level—some cities allow sidewalk bots, others ban them outright. Transit shuttles often receive special permits for low-speed operation on limited routes. These regulatory boundaries translate directly into ODD constraints.
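The ODD dimensions discussed above (weather, time of day, speed, and geography) can be captured as an explicit data structure that is checked at run time. The sketch below is a simplified illustration: the field names, the rectangular geofence, and the campus-shuttle values are all hypothetical, and the time window is assumed not to wrap past midnight.

```python
from dataclasses import dataclass
from datetime import time

@dataclass(frozen=True)
class OperationalDesignDomain:
    allowed_weather: frozenset  # e.g. {"clear", "cloudy"}
    earliest: time              # start of the permitted operating window
    latest: time                # end of the permitted window (same day)
    max_speed_kph: float
    geofence: tuple             # (min_lat, min_lon, max_lat, max_lon) box

    def violations(self, weather: str, now: time, speed_kph: float,
                   lat: float, lon: float) -> list:
        """Return the names of all ODD constraints the situation violates."""
        found = []
        if weather not in self.allowed_weather:
            found.append("weather")
        if not (self.earliest <= now <= self.latest):
            found.append("time_of_day")
        if speed_kph > self.max_speed_kph:
            found.append("speed")
        min_lat, min_lon, max_lat, max_lon = self.geofence
        if not (min_lat <= lat <= max_lat and min_lon <= lon <= max_lon):
            found.append("geofence")
        return found

# Hypothetical campus shuttle: fair weather, daylight, low speed, small area.
shuttle_odd = OperationalDesignDomain(
    allowed_weather=frozenset({"clear", "cloudy"}),
    earliest=time(7, 0),
    latest=time(19, 0),
    max_speed_kph=25.0,
    geofence=(59.390, 24.660, 59.400, 24.680),
)

print(shuttle_odd.violations("clear", time(12, 30), 18.0, 59.395, 24.670))
print(shuttle_odd.violations("rain", time(21, 0), 18.0, 59.395, 24.670))
```

Returning the list of violated constraints, rather than a single boolean, makes the result usable both for a run-time fallback decision and for logging which boundary of the ODD was crossed.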

In terms of physical domains, ground-based autonomous systems, especially in automotive contexts, are the most commercially visible. Self-driving vehicles operate in human-dense environments, requiring perception systems to identify pedestrians, cyclists, vehicles, and traffic infrastructure. Validation here relies heavily on scenario-based testing, simulation, and controlled pilot deployments. Standards like ISO 26262 (functional safety), ISO 21448 (SOTIF), and UL 4600 (autonomy system safety) guide safety assurance. Regulatory frameworks are evolving state-by-state or country-by-country, with ODD restrictions acting as practical constraints on deployment.

Autonomous aircraft (e.g., drones, urban air mobility platforms, and optionally piloted systems) must operate in highly structured, safety-critical environments. Validation involves rigorous formal methods, fault tolerance analysis, and conformance with aviation safety standards such as DO-178C (software) and DO-254 (hardware), together with emerging guidance such as the standards from ASTM Committee F38 and EASA's SC-VTOL. Airspace governance is centralized and mature, often requiring type certification and airworthiness approvals. Unlike automotive systems, airborne autonomy must prove reliability in loss-of-link scenarios and demonstrate fail-operational capabilities across flight phases.

Autonomous surface and underwater marine systems face unstructured and communication-constrained environments. They must operate reliably in GPS-denied or RF-blocked conditions while detecting obstacles like buoys, vessels, or underwater terrain. Validation is more empirical, often involving extended sea trials, redundancy in navigation systems, and adaptive mission planning. IMO (International Maritime Organization) and classification societies like DNV are working on Maritime Autonomous Surface Ship (MASS) regulatory frameworks, though global standards are still nascent. The dual-use nature of marine autonomy (civil and defense) adds governance complexity.

Space-based autonomous systems (e.g., planetary rovers, autonomous docking spacecraft, and space tugs) operate under extreme constraints: communication delays, radiation exposure, and no real-time human oversight. Validation occurs through rigorous testing in Earth-based analog environments, formal verification of critical software, and fail-safe design principles. Governance falls under national space agencies (e.g., NASA, ESA) and international frameworks like the Outer Space Treaty. Assurance relies on mission-specific autonomy envelopes and pre-defined decision trees rather than reactive autonomy.

Governance also differs. Aviation and space operate within centralized, internationally coordinated regulatory systems (ICAO, FAA, EASA, NASA), while ground autonomy remains highly fragmented across jurisdictions. Maritime governance is progressing but lacks harmonization. Space governance, although anchored in treaties, increasingly contends with commercial activity and national interests, demanding updated risk management protocols.

Emerging efforts like the SAE G-34/SC-21 standard for AI in aviation, NASA's exploration of adaptive autonomy, and ISO’s work on AI functional safety indicate a trend toward domain-agnostic principles for validating intelligent behavior. There is growing recognition that autonomous systems, regardless of environment, need rigorous testing of edge cases, clarity of system intent, and real-time assurance mechanisms.

Ref:

[1] Vargas, J.; Alsweiss, S.; Toker, O.; Razdan, R.; Santos, J. An Overview of Autonomous Vehicles Sensors and Their Vulnerability to Weather Conditions. Sensors 2021, 21, 5397. https://doi.org/10.3390/s21165397

Summary

This chapter has provided an overview of autonomous systems (ground, airborne, marine, space), the initial framing of expectation functions for autonomy, the governance structures within which autonomy must operate, an overview of the validation and verification mechanisms used to support these governance structures, and finally an overview of autonomy in each of the physical domains.

In the subsequent chapters, we will delve deeper into these topics with a framing informed by autonomy abstractions as shown in the figure below. At the “bottom” of these abstractions are the physical objects such as the mechanical devices and the associated electronics hardware. Layered above the electronics hardware layer are various software layers which start with middleware/infrastructure, algorithmic layers, and finally the connection to humans.

These topics will be addressed at the conceptual level and also examined in specific fashion for the four physical domains (example figure below).

Productization Lessons and Assessments:

Key lessons for productization include:

- Engineers must understand their products operate inside a governance structure consisting of laws, regulations, and standards.

- In the case of autonomy, there are many historical standards, but new standards are also still under development.

- A very key aspect of product design is the expectation function for the product. This expectation function is key to communication from a marketing perspective and also from a legal liability perspective.

| Domain | Primary Standards Body | Key Autonomy Standard |
|---|---|---|
| Ground | SAE | SAE J3016 |
| Ground | ISO | ISO 26262, ISO 21448 |
| Ground | UNECE | UN R157 |
| Airborne | RTCA | DO-178C, DO-365 |
| Airborne | FAA/EASA | UAV autonomy certification |
| Marine | IMO | MASS autonomy levels |
| Marine | DNV | Autonomous ship standards |
| Space | NASA | ALFUS autonomy framework |
| Space | CCSDS | Spacecraft autonomy protocols |
| Cross-domain | IEEE | IEEE 7000 series |
| Cross-domain | IEC | IEC 61508 |
| Cross-domain | NIST | AI Risk Management Framework |

Industries and Companies:

| Type | Description | Example Players (Companies / Organizations) |
|---|---|---|
| Regulators & Government Agencies | Define laws, certification pathways, and operational constraints for autonomous systems across domains (ground, air, marine, space). They translate legislation into enforceable rules and approvals. | NHTSA, FAA, EASA, International Maritime Organization, NASA, ESA |
| Standards Organizations / Industry Consortia | Develop technical standards, safety frameworks, and autonomy classification systems that regulators and industry rely on (e.g., SAE levels, ISO safety standards). | SAE International, ISO, IEEE, RTCA, ASTM |
| Legal & Advisory Firms | Interpret liability, compliance, and regulatory frameworks; support litigation, risk assessment, and policy strategy for autonomy deployments. | Baker McKenzie, DLA Piper, Latham & Watkins |
| Certification & Testing Authorities | Provide independent validation, certification audits, and compliance verification against safety standards (ASIL, DAL, etc.). Critical for market entry. | TÜV SÜD, UL Solutions, DNV |
| Simulation & Digital Twin Software Providers | Provide tools for scenario-based validation, digital twins, and V&V workflows across autonomy stacks (SIL/HIL, scenario generation, formal testing). | NVIDIA (DRIVE Sim), MathWorks, Ansys, Siemens |
| Test Track & Physical Testing Infrastructure Providers | Operate controlled environments for real-world validation (proving grounds, UAV corridors, maritime test ranges). Bridge sim-to-real validation. | American Center for Mobility, MCity, FAA UAV Test Sites |

Hardware and Sensing Technologies

The underlying active physical components for all electronic systems are semiconductors. Semiconductors span several major categories based on function, material system, and integration level. At the most basic level are discrete devices such as diodes, MOSFETs, IGBTs, and rectifiers, which control current and voltage and are widely used in power conversion and motor drives. Analog and mixed-signal semiconductors handle sensing, amplification, signal conditioning, and power management (e.g., ADCs, DACs, voltage regulators, sensor interfaces). Memory semiconductors, such as DRAM, SRAM, NAND flash, and emerging non-volatile memories like MRAM, store data and program code. Power semiconductors use materials such as silicon, silicon carbide (SiC), and gallium nitride (GaN) to efficiently switch high voltages and currents in electric vehicles, aircraft power systems, and renewable energy converters. Specialized devices such as RF front-end chips, image sensors (CMOS), FPGAs, and AI accelerators support communication, perception, and high-performance computing tasks. Finally, an important category is digital logic devices, which include microcontrollers (MCUs), microprocessors (MPUs), and system-on-chip (SoC) devices that execute programming of some form (FPGA, software, AI). We shall discuss this category in greater detail in the next chapter on software. Together, these categories form the layered semiconductor ecosystem that underpins modern automotive, airborne, marine, and space electronic architectures.

In this chapter, we shall review the historical background of the adoption of semiconductors in the various mobility domains. As a part of this background, we shall outline some key “productization” challenges such as safety, governance, and supply chain management. With this background, we will introduce the jump in complexity introduced by autonomy and revisit the key “productization” challenges.

Historical Background

Historically, cyber-physical systems were mechanically based, but with the advent of modern electronics, critical functions moved rapidly to electronics subsystems. For example, in automotive electronics in the 1970s and early 1980s, tightening emissions standards in the U.S., Europe, and Japan pushed automakers to adopt microprocessor-based engine control units (ECUs). What began as simple ignition timing modules evolved into closed-loop engine management systems handling fuel injection and knock control, which correspond to the “Power Train” block shown in Figure 1. These early semiconductor deployments were ruggedized analog/mixed-signal designs, optimized for reliability in high-temperature environments rather than computational complexity.

Through the late 1980s and 1990s, electronics expanded from powertrain into chassis and safety systems. Anti-lock braking systems (ABS), electronic stability control, traction control, and eventually electric power steering (EPS) required real-time sensing and actuation. This corresponds to the “Chassis” and “Safety and Control” domains in Figure 1 (ABS, airbag controllers, TPMS, collision warning). Here, semiconductors enabled distributed sensing (wheel speed sensors, accelerometers, pressure sensors) and deterministic embedded processing. The architecture remained domain-centric: each function had its own ECU, with limited cross-domain integration.

The next wave, roughly 1995–2010, was driven less by regulation and more by consumer expectation. Vehicles became platforms for infotainment and comfort electronics, shown in the “Infotainment” and “Comfort and Control” sections of Figure 1 (dashboard displays, navigation, climate control, seat modules, body electronics). This phase marked the introduction of higher-performance digital SoCs, memory subsystems, and human-machine interface processors. Importantly, this is when in-vehicle networking standards such as CAN, LIN, and later FlexRay (listed under “Networking”) became essential. The car shifted from isolated ECUs to a distributed electronic architecture connected by data buses. Semiconductors were no longer just controllers; they were nodes in a communication network.

Figure 1: Automobile electronics

By the 2010s, semiconductor content per vehicle had grown sharply, especially with hybrid and electric vehicles. Power electronics (IGBTs, MOSFETs, later SiC devices), battery management systems, and high-voltage control loops dramatically increased the role of advanced semiconductor materials and mixed-signal integration. Simultaneously, advanced driver assistance systems (ADAS), such as collision warning, parking assist, and night vision, required vision processors, radar front-ends, and sensor fusion chips, extending the “Safety and Control” block into high-performance computing territory.

Airborne Sector

If the automotive graphic represents the distributed, domain-based maturation of electronics in cars, the airborne sector followed a similar but more safety-critical and certification-driven trajectory. In the early jet age (1950s–1970s), aircraft electronics (avionics) were largely analog and federated. Radar, navigation, flight instruments, engine monitoring, and autopilot systems were separate boxes with limited interconnection. Semiconductors initially replaced vacuum tubes for reliability and weight reduction, but computational capability was modest. Much like early automotive engine controllers, electronics were introduced to solve specific operational needs (navigation accuracy, radio communication, and flight stabilization) rather than to create an integrated digital platform.

The major inflection point came in the 1980s and 1990s with the rise of digital flight control and “fly-by-wire” architectures, proven earlier on military platforms such as the F-16 Fighting Falcon and brought into mainstream civil aviation by aircraft such as the Airbus A320. Here, semiconductors moved from advisory roles to safety-critical control loops. Digital signal processors and radiation-tolerant microcontrollers executed deterministic real-time algorithms for stability augmentation, envelope protection, and full-authority digital engine control (FADEC).

During the 1990s–2000s, avionics entered a “glass cockpit” era. Aircraft such as the Boeing 777 replaced analog gauges with integrated digital displays driven by high-reliability processors and graphics subsystems. Data buses such as ARINC 429 and later AFDX (ARINC 664) enabled deterministic networking between flight computers, sensors, and displays, analogous to CAN and FlexRay in the automotive diagram. However, unlike automotive networks, airborne data buses were built around strict partitioning, redundancy, and fault containment regions. Triple-modular redundancy and dissimilar processors became common for flight-critical functions.

In propulsion and power systems, semiconductors expanded from monitoring to active control. Full Authority Digital Engine Control (FADEC) units used mixed-signal ASICs and microprocessors to optimize fuel flow, reduce emissions, and improve reliability. With the emergence of “more-electric aircraft” concepts, exemplified by the Boeing 787, power electronics content increased substantially. High-voltage converters, motor drives, and solid-state power controllers replaced many pneumatic and hydraulic subsystems. This mirrors the electrification wave seen in automotive HEV/EV platforms, although aviation applied its stricter safety rigor from the start.
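The triple-modular redundancy mentioned above can be illustrated with a minimal majority voter. This is only a sketch under simplifying assumptions: the function name `tmr_vote` is hypothetical, the three channels are assumed to produce exactly comparable values, and real flight-critical voters typically compare analog quantities within tolerance windows and add timing and health monitoring.

```python
from collections import Counter
from typing import Hashable, List, Optional, Sequence, Tuple


def tmr_vote(outputs: Sequence[Hashable]) -> Tuple[Optional[Hashable], List[int]]:
    """Majority-vote three redundant channel outputs.

    Returns (voted_value, disagreeing_channel_indices).
    voted_value is None when no two channels agree.
    """
    if len(outputs) != 3:
        raise ValueError("TMR requires exactly three channel outputs")

    # Find the most common value and how many channels produced it.
    value, count = Counter(outputs).most_common(1)[0]
    if count < 2:
        # No majority: all three channels disagree, so nothing can be trusted.
        return None, [0, 1, 2]

    # Channels that differ from the majority are flagged for fault handling.
    disagreeing = [i for i, v in enumerate(outputs) if v != value]
    return value, disagreeing
```

A single faulty channel is masked and identified at the same time, which is why the scheme tolerates one fault but not two simultaneous, differing faults.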

Marine Sector

The marine industry’s use of electronics evolved from isolated navigation aids to highly integrated digital ship systems, following a trajectory structurally similar to automotive but at much larger power scales and with longer asset lifecycles. In the 1950s through the 1970s, marine electronics were primarily analog and functionally segregated: radar, sonar, gyrocompasses, VHF radios, and basic autopilots operated as standalone systems. Early semiconductor adoption focused on improving reliability and reducing size, particularly in radar and communication equipment. These systems were advisory in nature; propulsion and steering remained largely mechanical or hydraulic.

The first major digital transition occurred in the 1980s and 1990s with the arrival of microprocessor-based engine control, satellite navigation (GPS), and electronic charting systems. Ships began incorporating digital propulsion governors, fuel optimization systems, and centralized alarm monitoring. This period resembles the automotive shift from carburetors to engine control units and ABS. Importantly, networking standards such as NMEA 0183 and later NMEA 2000 allowed sensors and navigation systems to exchange data, marking the move from isolated instrumentation to distributed marine electronics architectures.
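To make the data exchange over NMEA 0183 more concrete, the sketch below validates the checksum of a sentence. It relies on the commonly documented rule that the checksum is the XOR of all characters between `$` and `*`, written as two hexadecimal digits. The function names and the sample payload are illustrative assumptions, and the code ignores other sentence forms and length limits.

```python
from functools import reduce


def nmea_checksum(payload: str) -> str:
    """XOR all characters of the payload (the text between '$' and '*')."""
    value = reduce(lambda acc, ch: acc ^ ord(ch), payload, 0)
    return f"{value:02X}"


def is_valid_sentence(sentence: str) -> bool:
    """Check that a '$...*HH' sentence carries a matching checksum."""
    sentence = sentence.strip()
    if not sentence.startswith("$") or "*" not in sentence:
        return False
    # Split at the last '*': payload before it, received checksum after it.
    payload, _, received = sentence[1:].rpartition("*")
    return nmea_checksum(payload) == received.upper()


# Build a sample sentence from a hypothetical position payload.
payload = "GPGLL,4916.45,N,12311.12,W,225444,A"
sentence = f"${payload}*{nmea_checksum(payload)}"
print(sentence, is_valid_sentence(sentence))
```

A receiver that rejects sentences with a mismatched checksum discards corrupted data instead of passing it to navigation functions, which is a small example of the fault detection that distributed architectures depend on.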

By the 2000s, large commercial and naval vessels adopted Integrated Bridge Systems (IBS) and Integrated Platform Management Systems (IPMS), consolidating radar, charting, sonar, propulsion status, and safety alerts into unified digital consoles. Power electronics content increased significantly with electric propulsion drives, thruster control, hybrid marine power systems, and dynamic positioning systems. This phase mirrors the automotive expansion into electrification and body-domain integration. In recent years, semiconductor density has grown further with sensor fusion for collision avoidance, remote fleet monitoring, predictive maintenance, and early-stage autonomous surface vessels. While regulatory frameworks remain conservative, marine architecture now consists of interconnected propulsion, navigation, safety, power distribution, and autonomy subsystems, conceptually analogous to the domain blocks in the automotive graphic.

Space Sector

The space sector followed a parallel but more reliability-driven evolution, shaped by radiation tolerance, extreme environments, and mission assurance requirements. In the early space age, spacecraft electronics were built from discrete logic and radiation-hardened components with very limited computational capacity. Systems were strictly federated: guidance, telemetry, power conditioning, communications, and thermal control were separate subsystems with built-in redundancy. Early digital computers such as the Apollo Guidance Computer demonstrated that semiconductors could enable autonomous navigation, but computational margins were minimal and fault tolerance was paramount.

During the 1990s and early 2000s, radiation-hardened microprocessors and standardized spacecraft data buses such as MIL-STD-1553 and SpaceWire enabled more modular digital architectures. Satellites adopted structured subsystems for attitude determination and control, onboard data handling, payload processing, and power regulation. Missions like the Hubble Space Telescope and deep-space platforms such as the Mars Reconnaissance Orbiter incorporated increasingly sophisticated onboard processing for navigation, instrument control, and fault management. This stage resembles the distributed ECU era in automotive, where each domain was digitally controlled but interconnected via deterministic buses.

In the modern era, semiconductor capability in space systems has expanded dramatically. High-throughput communications satellites, FPGA-based reconfigurable payloads, advanced solid-state power controllers, electric propulsion systems, and autonomous fault detection algorithms define current architectures. Commercial constellations developed by companies such as SpaceX have introduced vertically integrated avionics stacks and more software-defined spacecraft platforms. Unlike automotive, however, semiconductor design in space prioritizes radiation hardening, redundancy, and long-duration reliability over cost optimization. The overall trajectory mirrors the automotive diagram’s layered growth: from instrumentation digitization to closed-loop control, to networked subsystems, and now toward increasingly autonomous, software-defined space platforms.

Across the marine and space domains, as in automotive, semiconductor adoption progressed from monitoring to control, from isolated subsystems to networked architecture, and from mechanical dominance to electrically and computationally mediated platforms. The architectural blocks differ in naming (propulsion, navigation, attitude control, power conditioning), but structurally they represent the same historical layering visible in the automotive figure.

Governance and Safety

As semiconductor content in vehicles increased, automotive safety protocols evolved from informal engineering practices to highly structured, lifecycle-based governance frameworks that now extend down to silicon IP and AI behavior. In the 1980s and 1990s, when electronic systems such as ABS and airbag controllers first became widespread, safety assurance was largely handled through company-specific processes. OEMs and Tier-1 suppliers relied on internal FMEA methods, redundancy design practices, and in some cases adaptations of concepts from aerospace guidance such as DO-178. There was no unified automotive electronic safety standard, even as vehicles transitioned from isolated ECUs to increasingly networked systems.

The first major formal framework influencing automotive electronics was IEC 61508, first published in 1998. IEC 61508 introduced Safety Integrity Levels (SILs), lifecycle safety management, probabilistic hardware fault metrics, and the concept of a structured safety case. However, it was designed as a generic standard for industrial programmable electronic systems. As vehicle architectures became more distributed and semiconductor complexity grew, moving from simple microcontrollers to multi-domain ECUs connected via CAN, automotive stakeholders recognized the need for a sector-specific adaptation.

That led to the publication of ISO 26262 in 2011. ISO 26262 was a transformative step, introducing Automotive Safety Integrity Levels (ASIL A–D), formal Hazard Analysis and Risk Assessment (HARA), hardware architectural metrics such as Single Point Fault Metric (SPFM) and Latent Fault Metric (LFM), and strict requirements traceability across the development lifecycle. Importantly, ISO 26262 directly influenced semiconductor design. Silicon vendors began offering ASIL-ready microcontrollers with lockstep CPU cores, ECC-protected memory, watchdog timers, and documented FMEDA data to support system integrators. Safety moved from being a vehicle-level validation exercise to being embedded in chip architecture and development processes.
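The hardware architectural metrics named above can be sketched numerically. The example below uses the commonly cited definitions, SPFM = 1 − (λ_SPF + λ_RF) / λ_total and LFM = 1 − λ_latent / (λ_total − λ_SPF − λ_RF), together with the commonly cited target values for ASIL B, C, and D. The class name, function names, and failure rates are hypothetical, and the standard itself remains the authoritative source for definitions and targets.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FailureRates:
    """Failure rates (e.g., in FIT) of the safety-related hardware elements."""
    total: float          # all safety-related failure rates summed
    single_point: float   # single-point faults (no safety mechanism)
    residual: float       # residual faults (not covered by a mechanism)
    latent: float         # latent multiple-point faults


def spfm(r: FailureRates) -> float:
    """Single Point Fault Metric."""
    return 1.0 - (r.single_point + r.residual) / r.total


def lfm(r: FailureRates) -> float:
    """Latent Fault Metric."""
    return 1.0 - r.latent / (r.total - r.single_point - r.residual)


# Commonly cited (SPFM, LFM) targets per ASIL; treated here as assumptions.
TARGETS = {"D": (0.99, 0.90), "C": (0.97, 0.80), "B": (0.90, 0.60)}


def highest_asil_met(r: FailureRates) -> Optional[str]:
    """Return the most demanding ASIL whose metric targets are both met."""
    for asil in ("D", "C", "B"):
        spfm_target, lfm_target = TARGETS[asil]
        if spfm(r) >= spfm_target and lfm(r) >= lfm_target:
            return asil
    return None


rates = FailureRates(total=1000.0, single_point=2.0, residual=6.0, latent=50.0)
print(f"SPFM={spfm(rates):.3f}, LFM={lfm(rates):.3f}, meets ASIL {highest_asil_met(rates)}")
```

In practice the input failure rates come from the FMEDA data that silicon vendors supply, which is why that documentation became part of the product.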

The historical progression of safety protocols in airborne systems reflects the increasing reliance on semiconductors in avionics, flight control, and mission-critical software. Unlike automotive, aviation adopted structured safety governance very early, because electronics entered directly into safety-critical control loops such as autopilot and fly-by-wire. Software assurance was formalized through the DO-178 series, and the increasing integration of custom ASICs and programmable logic devices in avionics then led to the publication of DO-254 in 2000. DO-254 formalized design assurance for airborne electronic hardware, including FPGAs and complex microcircuits. It required documented development lifecycles, verification rigor proportional to hardware design assurance levels, and traceability from requirements to implementation.

For marine systems, as digital navigation and propulsion control systems expanded in the 1980s and 1990s, regulatory attention shifted toward reliability and redundancy of electronic systems. Classification societies such as DNV, Lloyd's Register, and the American Bureau of Shipping developed rules for electrical and control systems onboard ships. These rules require redundancy in steering and propulsion control, fault tolerance in dynamic positioning systems, and environmental qualification of electronics for vibration, humidity, and salt exposure. The introduction of the Global Maritime Distress and Safety System (GMDSS) in the 1990s marked a major digital milestone. Satellite communications, automated distress signaling, and integrated bridge systems increased semiconductor density. As ships adopted Integrated Bridge Systems (IBS) and Integrated Platform Management Systems (IPMS), classification societies began issuing more formal guidance on software quality, failure mode analysis, and cyber resilience. Still, marine governance remained largely prescriptive and performance-based rather than process-assurance-based.

Finally, space safety and electronics assurance evolved under extreme reliability constraints from the beginning, due to the impossibility of repair and the high cost of mission failure. Early space programs operated under agency-specific reliability and redundancy doctrines rather than formalized software standards. NASA and defense space agencies emphasized radiation hardening, hardware redundancy, and conservative design margins. Spacecraft have used fault detection, isolation, and recovery (FDIR) techniques from the outset.

Overall, safety standards in each sector have developed in step with the growing use of electronic systems in safety-critical functions.

Conventional Validation and Verification

As discussed in chapter 2, all of these systems operate under a governance structure, and validation and verification technology is what links the technical world to that structure. Critical in enabling these processes is the domain of Electronic Design Automation (EDA). EDA refers to the software tools and workflows used to design, verify, and prepare semiconductor devices and electronic systems for manufacturing. At the chip level, the flow typically begins with system architecture and specification, followed by separate but converging analog and digital design streams.

In digital design, engineers describe functionality using hardware description languages (HDLs) such as Verilog or VHDL, simulate for functional correctness, synthesize to logic gates, and perform place-and-route to create a physical layout. This is followed by static timing analysis, power analysis, signal integrity checks, and increasingly, formal verification and functional safety validation (e.g., in ISO 26262 contexts). In analog/mixed-signal design, the flow is more device- and layout-centric: schematic capture, SPICE-level simulation (corner, Monte Carlo, noise, mismatch), layout with careful parasitic extraction, and iterative verification (LVS/DRC). At advanced nodes, the boundary between analog and digital blurs in mixed-signal SoCs, requiring tight co-simulation and cross-domain verification.
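The static timing analysis step in the digital flow can be illustrated with a toy model. The sketch below treats the netlist as a directed acyclic graph, propagates the worst-case (latest) arrival time to each node, and computes setup slack at a capturing flip-flop. All node names and delay values are hypothetical, and production tools additionally model clock skew, hold checks, on-chip variation, and process corners.

```python
from graphlib import TopologicalSorter
from typing import Dict, Tuple


def worst_arrival_times(
    edges: Dict[Tuple[str, str], float],
    launch: Dict[str, float],
) -> Dict[str, float]:
    """Propagate latest arrival times (ns) through a combinational DAG.

    edges maps (source, destination) to a path delay in ns.
    launch maps start points to their launch time (e.g., clock-to-Q delay).
    """
    # Build a predecessor map: node -> {predecessor: delay}.
    preds: Dict[str, Dict[str, float]] = {}
    for (src, dst), delay in edges.items():
        preds.setdefault(src, {})
        preds.setdefault(dst, {})[src] = delay

    # Visit nodes so that every predecessor is processed first.
    order = TopologicalSorter({n: set(p) for n, p in preds.items()}).static_order()
    arrival: Dict[str, float] = {}
    for node in order:
        incoming = [arrival[s] + d for s, d in preds[node].items()]
        arrival[node] = max(incoming) if incoming else launch.get(node, 0.0)
    return arrival


def setup_slack(arrival_ns: float, clock_period_ns: float, setup_ns: float) -> float:
    """Positive slack means the data arrives early enough to be captured."""
    return clock_period_ns - setup_ns - arrival_ns


edges = {
    ("ff1_q", "and1"): 0.4, ("ff2_q", "and1"): 0.6,
    ("and1", "or1"): 0.5, ("ff2_q", "or1"): 0.3,
    ("or1", "ff3_d"): 0.2,
}
arrival = worst_arrival_times(edges, launch={"ff1_q": 0.1, "ff2_q": 0.1})
print(f"slack at ff3_d: {setup_slack(arrival['ff3_d'], 2.0, 0.1):.2f} ns")
```

The analysis is "static" because no input vectors are simulated: only the graph structure and delays are examined, which is what lets it cover every path exhaustively.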

Once the silicon design is complete, the flow extends to package design, which has become increasingly critical in advanced-node and heterogeneous integration contexts (e.g., chiplets, 2.5D/3D integration). Package EDA tools model signal integrity, power integrity, thermal behavior, and mechanical stress across substrates, interposers, and bumps. The package is no longer a passive carrier; it is an electrical extension of the die, affecting timing closure, power delivery, and high-speed interfaces (e.g., UCIe, HBM). Finally, at the PCB level, board design tools integrate schematic capture, component placement, routing, and multi-physics analysis (signal integrity, EMI/EMC, thermal). High-speed digital systems require co-design between chip I/O, package escape routing, and PCB stackup to maintain impedance control and timing margins. Modern EDA workflows increasingly emphasize cross-domain co-design, from transistor to board, because performance, reliability, and safety are emergent properties of the entire electronic system, not just the silicon alone.
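As a small illustration of the impedance control mentioned above, the sketch below evaluates a widely used closed-form approximation for surface microstrip impedance, Z0 ≈ 87 / sqrt(εr + 1.41) · ln(5.98·h / (0.8·w + t)). The function name and the stackup values are assumptions chosen for illustration. The formula is only reasonable for moderate width-to-height ratios, and real designs rely on field solvers inside the board design tools.

```python
import math


def microstrip_impedance(width_mm: float, height_mm: float,
                         thickness_mm: float, eps_r: float) -> float:
    """Approximate characteristic impedance (ohms) of a surface microstrip.

    width_mm:     trace width
    height_mm:    dielectric height between trace and reference plane
    thickness_mm: copper thickness
    eps_r:        relative permittivity of the dielectric
    """
    if width_mm <= 0 or height_mm <= 0 or thickness_mm < 0 or eps_r < 1:
        raise ValueError("geometry must be positive and eps_r >= 1")
    return (87.0 / math.sqrt(eps_r + 1.41)
            * math.log(5.98 * height_mm / (0.8 * width_mm + thickness_mm)))


# Hypothetical FR-4-like stackup: sweep trace width to see its effect.
for width in (2.6, 3.0, 3.2):
    z0 = microstrip_impedance(width, height_mm=1.6, thickness_mm=0.035, eps_r=4.5)
    print(f"w={width:.1f} mm -> Z0 ~ {z0:.1f} ohm")
```

The sweep shows the basic trade-off a stackup designer works with: widening the trace or thinning the dielectric lowers the impedance, so trace geometry and layer stackup have to be chosen together.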